Understanding the Einstein GPT Trust Layer


Trust is at the heart of Salesforce’s mission, and it has always been the company’s top priority. Back when it was trailblazing in the world of Customer Relationship Management (CRM), Salesforce® stood out by being one of the first to openly share system status with its customers: you can check the performance of your Salesforce Org at any time by visiting trust.salesforce.com. Now, as Salesforce ventures into the realm of Artificial Intelligence (AI), trust takes on even greater significance. Salesforce has introduced on-platform AI tools like Apex GPT and closely integrated GPT solutions from partners like OpenAI, all designed to enhance your experience with Salesforce while maintaining trust and reliability.

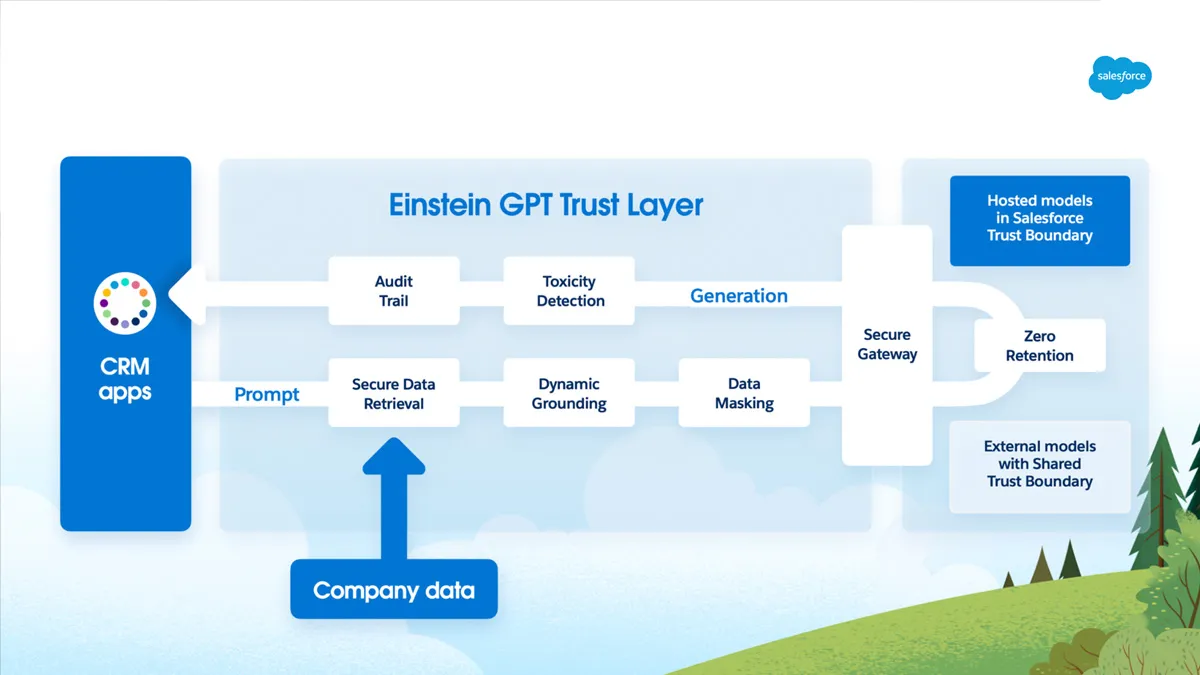

What sets Salesforce apart in the AI landscape is a unique advantage: your company’s vital data already lives within the Salesforce Platform, and Data Cloud makes it easy to tap into external data as well. This means that Salesforce can harness the power of AI while safeguarding your data. It achieves this through the Einstein GPT Trust Layer, and in this Salesforce Blog, we’re going to explore some of its standout features.

(Image courtesy of Salesforce.com)

Secure Data Retrieval

Salesforce has a robust Security Model that companies can leverage for their CRM and Data Cloud. Einstein GPT works with your existing Security Model so that a User’s Prompts never return results that reference Data that User cannot access. This means you can rest assured that your Org’s Security is taken into account in all Einstein GPT interactions.
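To make the idea concrete, here is a minimal sketch of permission-aware retrieval: records are filtered by what the User can see before any of them are added to a Prompt. This is an illustration only, not Salesforce’s implementation or API. The `Record` and `User` types, the team-based access rule, and both function names are hypothetical, and the real Salesforce Security Model is far richer than a single team check.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    """Hypothetical CRM record; 'owner_team' stands in for real sharing rules."""
    record_id: str
    owner_team: str
    text: str


@dataclass(frozen=True)
class User:
    """Hypothetical user; 'teams' stands in for the user's actual permissions."""
    name: str
    teams: frozenset


def visible_records(user, records):
    """Keep only the records this user is allowed to see."""
    return [r for r in records if r.owner_team in user.teams]


def build_grounded_prompt(user, question, records):
    """Build a prompt whose context contains only records visible to the user."""
    context = "\n".join(f"- {r.text}" for r in visible_records(user, records))
    return f"Context:\n{context}\n\nQuestion: {question}"


records = [
    Record("001", "sales", "Acme renewal is due in March."),
    Record("002", "finance", "Acme has an unpaid invoice."),
]
rep = User("Dana", frozenset({"sales"}))
print(build_grounded_prompt(rep, "What is happening with Acme?", records))
```

Because the filter runs before the Prompt is assembled, the finance record never reaches the model for this User, so it cannot appear in the response.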

Zero Retention

Nobody wants to worry about another company using their data, so Salesforce addresses that concern with its Zero Retention policy. For many Einstein GPT use cases, Salesforce has partnered with third-party LLM providers. Its legal agreement with OpenAI means that Prompts sent from Salesforce are not stored by OpenAI and are not used to train or monitor OpenAI’s models.

Prompt Defense and Prompt Masking

Salesforce uses its own models to identify which parts of a Prompt are sensitive information and then masks them.

- To mask sensitive Prompt data, Salesforce replaces the sensitive information with an alphanumeric key before sending the Prompt out.

- Even though the third-party providers retain no data, the Prompts are still masked for an extra layer of security.

- Once the response has been returned, Salesforce replaces the alphanumeric keys with the corresponding values so that the customer sees the correct response.
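The mask-and-unmask round trip described above can be sketched in a few lines. This is a simplified illustration, not Salesforce’s implementation: Salesforce uses models to detect sensitive information, while this sketch assumes a plain regular expression for email addresses and US-style phone numbers, and the `TKN_` key format and function names are made up for the example.

```python
import re
import secrets

# Assumption: a simple regex detector standing in for a real sensitivity model.
SENSITIVE_PATTERN = re.compile(
    r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"   # email addresses
    r"|\b\d{3}-\d{3}-\d{4}\b"         # phone numbers such as 555-123-4567
)


def mask_prompt(prompt):
    """Replace sensitive values with random keys; return the masked prompt and the key map."""
    key_to_value = {}
    value_to_key = {}

    def replace(match):
        value = match.group(0)
        if value not in value_to_key:
            # Hypothetical key format: a prefix plus 8 random hex characters.
            key = "TKN_" + secrets.token_hex(4).upper()
            value_to_key[value] = key
            key_to_value[key] = value
        return value_to_key[value]

    return SENSITIVE_PATTERN.sub(replace, prompt), key_to_value


def unmask_response(response, key_to_value):
    """Swap each key in the model's response back to the original value."""
    for key, value in key_to_value.items():
        response = response.replace(key, value)
    return response


masked, keys = mask_prompt("Email jane.doe@example.com or call 555-123-4567.")
print(masked)                         # sensitive values are replaced by keys
print(unmask_response(masked, keys))  # original text is restored
```

The key map never leaves the sender’s side, so the external provider only ever sees the keys, and the original values are restored locally once the response comes back.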

Additional Trusted GPT Options

Apex GPT can generate Apex Code and Apex Test Classes. It uses CodeGen, a model that was built by Salesforce and lives inside Salesforce, giving you the same native Trust that you already have in your Salesforce Org.

Summary

By leveraging its reputation in Customer Relationship Management (CRM), Salesforce offers AI solutions that safeguard user data. The Einstein GPT Trust Layer ensures secure data retrieval by aligning with the existing Security Model, preventing unauthorized access to sensitive data. Salesforce’s Zero Retention policy ensures that Prompts are not stored by third-party providers or used to train their models. Additionally, Salesforce implements prompt defense and masking for added security, and customers have the flexibility to bring their own GPT models if they meet stringent security and privacy criteria.

How AdVic Can Help

Regardless of a company’s size or industry, the AdVic® Team provides tailored guidance and support to ensure that businesses can harness the power of AI and GPT and gain the critical insights necessary for their growth and competitive advantage.

Why not book a meeting with us today so we can start working on your solution?

Related Resources:

Unlocking the Potential of AI and GPT: A Business Primer

Harness the Power of Data with Salesforce Analytics Cloud & GPT-Powered Tools

Salesforce Introduces Generative AI Tools for Marketers
